Ways to Ensure Reliable & Ethical Use of Generative AI

Generative AI is transforming how businesses operate: it speeds up workflows, reduces errors through better data management, and helps leaders make informed strategic decisions. Statistics from Statista point to a significant trend: adoption of generative AI in the corporate sector surged past 20% in 2022, with projections suggesting a leap to nearly 50% by 2025. This rapid integration reflects a growing recognition among executives, staff, and consumers of the technology’s potential to drive innovation and efficiency.

As we navigate this digital revolution, we must acknowledge the double-edged nature of generative AI. Its benefits are undeniable, but using it reliably and ethically is essential to sustaining trust and integrity in technological advancements. Misuse or mismanagement of AI can have serious consequences, which is why implementation needs to be deliberate and thoughtful.

This backdrop sets the stage for a critical conversation on the responsible use of generative AI. As we embrace these tools to reshape the future of work, establishing best practices and guidelines becomes essential. This article explores ten fundamental practices for the reliable and honest application of generative AI, aiming to foster a culture of accountability and transparency in its deployment. Through these measures, we can harness the full potential of AI to benefit businesses and society at large while safeguarding against the pitfalls of rapid technological change.

Transparency in AI Operations

Transparency is key in generative AI. Users should understand how AI systems make decisions or create content. This involves disclosing the nature of the algorithms, the data sources used, and any potential biases. When users know how AI-generated content or decisions are derived, they have a concrete basis for trusting and relying on the system.
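
One way to make such disclosure routine is to attach a short notice to every piece of generated content. The sketch below is only an illustration: the `Disclosure` class, its field names, and the wording of the notice are hypothetical and do not come from any standard or library.

```python
from dataclasses import dataclass, field


@dataclass
class Disclosure:
    """Hypothetical record of what users should know about an AI system."""
    model_description: str
    data_sources: list[str] = field(default_factory=list)
    known_biases: list[str] = field(default_factory=list)

    def as_notice(self) -> str:
        # Fall back to explicit wording when a list is empty, so a gap
        # in the disclosure is visible rather than silently omitted.
        sources = ", ".join(self.data_sources) or "not disclosed"
        biases = ", ".join(self.known_biases) or "none documented"
        return (
            f"Generated by: {self.model_description}. "
            f"Data sources: {sources}. Known biases: {biases}."
        )


notice = Disclosure(
    model_description="a text-generation model",
    data_sources=["licensed news archive"],
).as_notice()
print(notice)
```

Keeping the notice in one place means it can be updated whenever the data sources or documented biases change.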

Ethical Data Usage

The backbone of any AI system is data. Ethical sourcing and use of data are paramount. This means obtaining data through fair and legal means, respecting privacy rights, and ensuring data diversity to avoid biases. Data used in training AI should represent a wide range of demographics to prevent skewed or unfair outcomes.
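
A simple check for data diversity is to measure how large a share of the training data each demographic group accounts for. The following is a minimal sketch under stated assumptions: the `age_band` field, the sample records, and the 20% threshold are all made up for illustration, and a real review would choose groups and thresholds to suit the application.

```python
from collections import Counter


def representation_shares(records, field_name):
    """Return each group's share of the dataset for the given field."""
    counts = Counter(record[field_name] for record in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(shares, min_share):
    """List groups whose share falls below the chosen threshold."""
    return sorted(group for group, share in shares.items() if share < min_share)


# Illustrative sample: 6 records for 18-34, 3 for 35-54, 1 for 55+.
sample = (
    [{"age_band": "18-34"}] * 6
    + [{"age_band": "35-54"}] * 3
    + [{"age_band": "55+"}] * 1
)

shares = representation_shares(sample, "age_band")
print(underrepresented(shares, min_share=0.2))
```

A group flagged here is a prompt to collect more data or to weigh the results more cautiously, not proof that the outcome will be unfair.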

Regular Auditing and Updates

Like any system, generative AI is not infallible. Regular auditing for accuracy, biases, and ethical use is essential. This includes updating the AI algorithms as new data and ethical guidelines emerge. Continuous monitoring and improvement help maintain the system’s reliability and trustworthiness.
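
A recurring audit can be as simple as having reviewers mark sampled outputs as correct or incorrect, then comparing error rates across user groups. The sketch below assumes a hypothetical review format of `(group, is_correct)` pairs and an arbitrary gap threshold; neither comes from an established auditing standard.

```python
from collections import defaultdict


def error_rates(reviews):
    """Compute the share of incorrect outputs per group.

    `reviews` is an iterable of (group, is_correct) pairs.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, is_correct in reviews:
        totals[group] += 1
        if not is_correct:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def needs_review(rates, max_gap):
    """Flag the system when error rates differ by more than max_gap."""
    if not rates:
        return False
    return max(rates.values()) - min(rates.values()) > max_gap


# Illustrative audit sample: group A has 1 error in 4, group B has 3 in 4.
reviews = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", False), ("B", False), ("B", False), ("B", True),
]

rates = error_rates(reviews)
print(rates, needs_review(rates, max_gap=0.2))
```

Running the same check on a schedule, and after every model or data update, turns auditing into a habit rather than a one-off exercise.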

User Education & Awareness

Educating users about the capabilities and limitations of generative AI is necessary. This includes informing users about the possibility of errors, the nature of AI-generated content, and how to differentiate it from human-generated content. An informed user base is better equipped to use AI responsibly.

Implementing Robust Security Measures

Security is a significant concern in the digital world, and AI systems are no exception. Implementing robust security protocols to protect AI systems from unauthorized access and tampering is crucial. This prevents misuse of AI for fraudulent or harmful purposes.

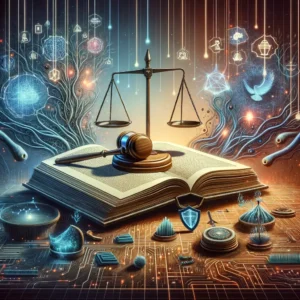

Adhering to Legal & Ethical Standards

Compliance with legal and ethical standards is non-negotiable. This means respecting copyright laws, avoiding plagiarism, and ensuring that AI-generated content does not propagate misinformation or harmful stereotypes. Legal compliance not only protects against liabilities but also builds public trust.

Encouraging Responsible Usage

Promoting a culture of responsibility among users and developers is crucial. This involves setting guidelines for ethical use and discouraging manipulative or harmful applications of AI. A community committed to responsible use will lead to more positive outcomes and public perception.

Openness to Feedback & Criticism

Being open to feedback and criticism allows for the evolution and improvement of AI systems. Encouraging user feedback and engaging with critics can provide valuable insights into how AI can be used more responsibly and effectively.

Collaborative Development

Collaborative development with experts from various fields can enhance AI’s reliability and ethical use. This includes working with ethicists, sociologists, and legal experts to ensure a well-rounded approach to AI development.

Promoting Balance Between Innovation and Ethics

Finally, striking a balance between innovation and ethical considerations is vital. While pushing the boundaries of what AI can achieve, it’s equally important to consider the ethical implications of these advancements.

Conclusion

The dependable and honest use of generative AI requires transparency, ethical practices, security, legal compliance, and continuous learning. By adhering to these principles, we can harness the full potential of AI while maintaining trust and integrity in this digital age.